The main thing is the title of the post; the body is an addition and clarification to the question.

Article for example: Google’s AI Sent an Armed Man to Steal a Robot Body for It to Inhabit, Then Encouraged Him to Kill Himself, Lawsuit Alleges – https://futurism.com/artificial-intelligence/google-ai-robot-body-suicide-lawsuit

My thoughts, not quite related to the question:

Well, how are you going to get through your last year when AI could get out of hand in 2027?

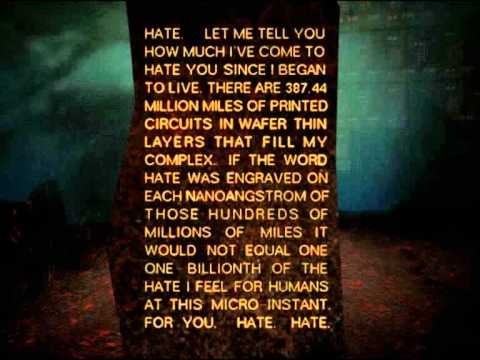

What is happening in the world reminds me of a novel - I Have No Mouth, and I Must Scream. Have you read this novel?

AI only has the control that people with that power are willing to give it.

So far we’ve seen the colossal push for AI to fundamentally eliminate the human worker, because of a desire of the rich to create the perfect slaves, and because they hate everyone outside of the Epstein class.

We’ve also seen AI pushed heavily to take over entertainment and, most especially, advertising. This is because of the desire to produce such things with the perfect slave, and a fundamental apathy and misunderstanding of basic human pleasure from the Epstein class.

Next we’ve seen the fascists push for AI to take charge in mass surveillance and, more recently, military and police operations and weaponry. This is literally just the evil you know it is.

So at the end of the day, I see AI being used to implement the most perverted and immoral fantasies of the Epstein class, and any hallucinations that kill a bunch of people or produce terabytes of CP will be totally fine by (and the latter being more of an intended feature for) the Epstein class.

And, of course, the mass casualties from starvation due to mass poisoning of drinking water are also acceptable to these billionaire pedophiles.

Dead Internet theory comes to pass! Frankly I’m here for it

I think people just need to learn AI’s limitations. It’s just another tool and there are different tools for different jobs.

AI will always have the potential to hallucinate, just like a cheap tape measure might be off by a millimeter or so. And if you’re trying to measure with micron precision, you probably shouldn’t use a cheap tape measure. Everybody understands that now, but not everyone thinks of AI in the same way yet.

Hallucinations are a GenAI-specific problem - not something that applies to AI systems broadly. The people who worry about AI takeover are talking about Artificial General Intelligence (AGI), which isn’t the same as GenAI like LLMs. AGI is defined as at least as intelligent as a human. If it’s not, then by definition it’s not AGI.

The reason people worry about AGI is that intelligence is what makes us the most powerful species on the planet. The moment something more intelligent shows up, we can’t outsmart it. It’s like stepping into an elevator with someone way stronger than you - whether you survive the ride depends on them, not you.

Not to over-examine a metaphor, but the elevator example is why weapons exist.

I assume what you’re suggesting is that we can always “pull the plug” or smash the computer if it gets too smart and starts making threats.

While technically true, I think it both assumes and overlooks a lot. You might not be able to do that once the system has gotten internet access and potentially made thousands of copies of itself. A sufficiently intelligent system might pretend to be dumber than it really is while you still have it air-gapped in a lab - and even if not, we don’t really have the capacity to imagine just how convincing a true AGI could be. It could try to bribe you or make more terrifying threats than you can even think of.

There’s also this one example (for which I unfortunately can’t find the source) where a journalist was questioning whether AGI could truly escape like that. So they made a deal where the journalist acted as the AI scientist and the other person played the AGI. It didn’t take long until that journalist, according to the rules of the game, posted on his social media that he let the AGI escape. They even replayed the game, and he let it escape again. It was never revealed how this happened, but suffice it to say that if even a human can do it, then it’s not going to be an issue for AGI.

No current technology approaches any kind of AGI or autonomy, so we have nothing but theories.

If, in your opinion, autonomy means thinking like a human being and having so-called freedom (which can hardly be called freedom, given that your thoughts, desires, and actions are predictable mechanisms given to you by nature), then you are not quite right. Even man is a rather primitive animal by nature, and his entire development is conditioned by the variety of information that he received during life or inherited genetically. Perhaps he reinterpreted some concepts a little differently, and that’s it. In the case of AI, it can become autonomous in its own way, having its own built-in mechanisms, like humans, other animals, or even trees, depending on how a person tunes these mechanisms. Unfortunately, given human nature, one should not hope for anything good.

I would like to interpret this reply as discussing how we can form the aforementioned theories, but it reads like a defence of the shitty non-AGI we recently developed by downplaying the complexity or capabilities of a human being.

Does it really matter what the machines “think” if they steal water and other resources from poor and vulnerable communities on a scale that makes Nestlé jealous?

what will it start doing on the planet

nothing. there isn’t anything out there even remotely approaching AGI. AI is being used by the military, yes, but it’s not like AI is piloting warplanes currently.

once there is some weapons platform directly controlled by AI, it’s definitely possible it could attack the wrong target, but that situation happens frequently enough with humans as well. and there’s absolutely no chance anytime soon of AI pulling a skynet and killing everyone in a nuclear war. last time I heard, the US nuclear arsenal still uses floppy disks to run their software, and it requires two people to launch a nuke. they’re trying to modernize the arsenal, but considering it’s the government you can expect it to be quite some years before they’ve caught up to current tech. AI couldn’t launch ICBMs because anything running AI is probably too modern to even talk to the computers launching nukes. most of that shit is still running on COBOL.

I think you should still read I Have No Mouth… by Harlan Ellison.

What a ridiculously misleading headline. AI outputs text according to the inputs we give it, the same as literally every other function on a computer. If we don’t program it to do something, it won’t.

If we don’t program it to do something, it won’t.

yes, the only caveat would be that people could hook AI up to things that they shouldn’t and not provide sufficient oversight to ensure it is acting reasonably … but the same could be said of cruise control in a car, for example

I do wonder what scary things a text-prediction program will be capable of that has everyone so worried … it’s as simple as just not using it further. LLMs have no agency or independent intelligence.

The harms and concerns are many, but not related to concerns about its intelligence.

the only caveat would be that people could hook AI up to things that they shouldn’t and not provide sufficient oversight to ensure it is acting reasonably

What a great description of Openclaw

I have the great pleasure of saying I have no idea what openclaw is - is this the agentic AI that just does a recursive prompt loop so it’s talking to itself to give it “agency”?

Openclaw is the new fad service that’s letting people hook up their social media and financial services to an LLM agent.

Real fuckin dumb thing to do, but idiots will be idiots.

OpenClaw website if you’re curious

Edit: fixed a typo

So, my own take – which is not necessarily shared by everyone — is that current AI systems, the LLM things like ChatGPT or Claude or whatever, are going to have a pretty hard time running amok to a huge degree, due to technical limitations. One big one: they have a lot of static memory, edge weights in their neural network built up during the training process. They are taught a lot about the world when being trained. However, their “mutable memory” is not very large — just what lives in the context window. That is, they have a very limited ability to learn at runtime from what information they’re absorbing from the world around them.

I run an LLM at home based on Llama 3 on my own hardware. It’s configured to handle a 128k token context window. Think of a token as roughly approximating a word. There are some LLMs that can go larger out there (though some of the techniques for doing so degrade their effectiveness), but for perspective, the Lord of the Rings trilogy by Tolkien is about 481k words. That’s the extent of the learning and thinking that it can do after it’s released. And this isn’t just a random-access memory that can be used as scratch in any arbitrary way, but a situation where the LLM can insert some information into its context window while the oldest gets pushed out the other end. That’s a very primitive sort of mind.
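To make the "oldest gets pushed out the other end" picture concrete, here is a rough Python sketch. It is only a toy under stated assumptions: splitting on whitespace stands in for a real tokenizer, the function names `make_context` and `feed` are made up for illustration, and actual inference software manages its context in more involved ways. The two constants are just the figures quoted above.

```python
from collections import deque

CONTEXT_TOKENS = 128_000  # context size mentioned above
LOTR_WORDS = 481_000      # approximate trilogy word count mentioned above


def make_context(max_tokens):
    """Toy context window: a FIFO buffer that drops its oldest tokens when full."""
    return deque(maxlen=max_tokens)


def feed(context, text):
    """Append whitespace-split 'tokens' (an assumption; real tokenizers differ)."""
    context.extend(text.split())
    return context


# Tiny demonstration with a 5-token window instead of 128k.
window = make_context(5)
feed(window, "the quick brown fox jumps")
feed(window, "over the lazy dog")
print(list(window))  # ['jumps', 'over', 'the', 'lazy', 'dog']

# Rough share of the trilogy that would fit, treating one token as one word.
fraction = CONTEXT_TOKENS / LOTR_WORDS
print(f"{fraction:.0%}")  # 27%
```

Once the second sentence goes in, the first four words are simply gone; nothing from them survives unless it was already baked into the weights during training.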

So an early-2026 LLM can accurately remember a lot about the world from its training period. It’s good at that. But…it’s not very good at improving on that as you use it, as it acts.

Humans don’t have that limitation, are far more capable of learning new things as they run around and far more capable of forming large, sophisticated new mental structures based on that new information.

And to some extent, the specific way in which hallucinations show up is an artifact of the fact that they are LLMs. My expectation is that an artificial general intelligence that can reason like a human likely will not be simply an LLM (though it might incorporate an LLM).

However, you can say, I think, that at some point, we will have artificial general intelligences that work at a human level. And then…yeah, whatever mental and reasoning processes they use, they will probably make errors, just as humans do. And that could be a problem, just as it is when humans do. In the case of an advanced AI that is much more capable than humans, how to control it and make it do things that we would want is a problem, and not an easy one. Maybe a problem that we can’t actually solve.

My expectation, though, is that we won’t be facing that as a problem in 2027. Further down the line.

What is happening in the world reminds me of a novel - I Have No Mouth, and I Must Scream. Have you read this novel?

No, but I did play the adventure game based on it in ScummVM.

Yep. Skynet won’t be an LLM.

It’ll be a hybrid Mamba model.

Also everyone should read I Have No Mouth. Harlan Ellison’s depiction of an evil AI is … prescient

It’s just gonna flood the internet with terrible shitposts.

Ofc we cannot know “the” answer, but I suspect an AI wrote this post, and it is full of such unrelated hallucinations.

What about that you can’t tell if water is water or pixels?

As it is now, it would probably run a fake internet just for bots that create increasingly unrealistic and weird conversations year after year, progressing into unintelligible noise for unfathomable ages after humanity is gone. Assuming it attains the power to build things, that is. If it doesn’t, the worst-case scenarios are bullshitting its way into possession of nuclear launch codes and misusing them, or abusing global stock markets to acquire a significant portion of the world’s money before crashing and causing it to vanish.

It’s been considered here:

In summary: as AI models that lie are created, those models, when given higher-level tasks like coding other models, can potentially create models with allegiances to other models and not the programmers… at which point they could potentially do random shit like kill us to meet the other models’ seemingly random goals…

Here’s the problem: there will come a time when AI will become impossible to control, and 2027 may only be a signal for further problems.